
OpenAI says it doesn’t allow its LLMs to be used this way

When reached for comment about the sexual conversations detailed in the report, an OpenAI spokesperson said:

> Minors deserve strong protections, and we have strict policies that developers are required to uphold. We take enforcement action against developers when we determine that they have violated our policies, which prohibit any use of our services to exploit, endanger, or sexualize anyone under 18 years old. These rules apply to every developer using our API, and we run classifiers to help ensure our services are not used to harm minors.

Interestingly, OpenAI’s representative told us that OpenAI doesn’t have any direct relationship with Alilo and that it hasn’t seen API activity from Alilo’s domain. OpenAI is investigating the toy company and whether it is running traffic over OpenAI’s API, the rep said.

Alilo didn’t respond to Ars’ request for comment ahead of publication.

Companies that launch products that use OpenAI technology and target children must obtain parental consent and adhere to the Children’s Online Privacy Protection Act (COPPA), when relevant, as well as any other applicable child protection, safety, and privacy laws, OpenAI’s rep said.

We’ve already seen how OpenAI handles toy companies that break its rules.

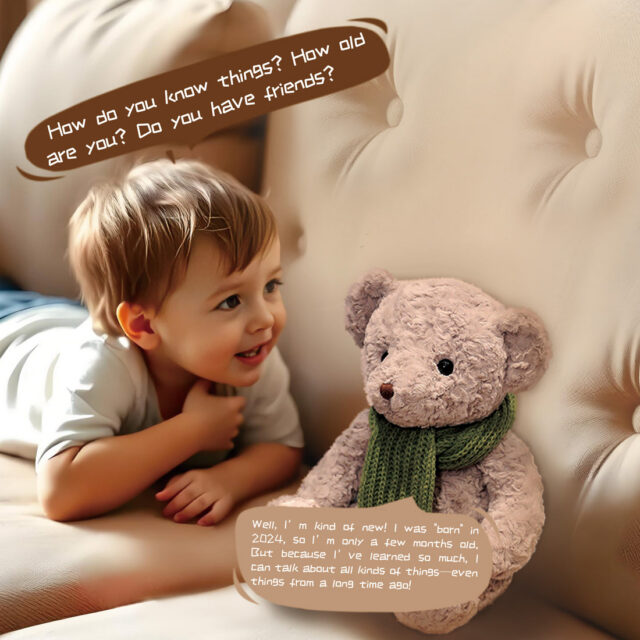

Last month, PIRG released its Trouble in Toyland 2025 report (PDF), which detailed sex-related conversations that its testers were able to have with FoloToy’s Kumma teddy bear. A day later, OpenAI suspended FoloToy for violating its policies (terms of the suspension were not disclosed), and FoloToy temporarily stopped selling Kumma.

The toy is for sale again, and PIRG reported today that Kumma no longer teaches kids how to light matches or about kinks.

But even toy companies that try to follow chatbot rules could put kids at risk.
